When a PedroCLI Stops Being a CLI

More about The Agent that is better than a crustacean

What started as a CLI to kick off background jobs quietly turned into something much bigger.

PedroCLI is no longer “just a CLI.” It’s the foundation of a personal AI agent whose logic can be accessed from multiple interfaces (CLI, web UI, mobile dictation). The funny thing is, this very quickly became less of an AI engineering task and more of a systems engineering task. It was not a hand-it-off-to-AI-and-go project; we had to worry about shared state, parallel jobs, DRY (Don’t Repeat Yourself) engineering, and race conditions. Basically, all the fun things 😊.

How did we handle these agentic processes?

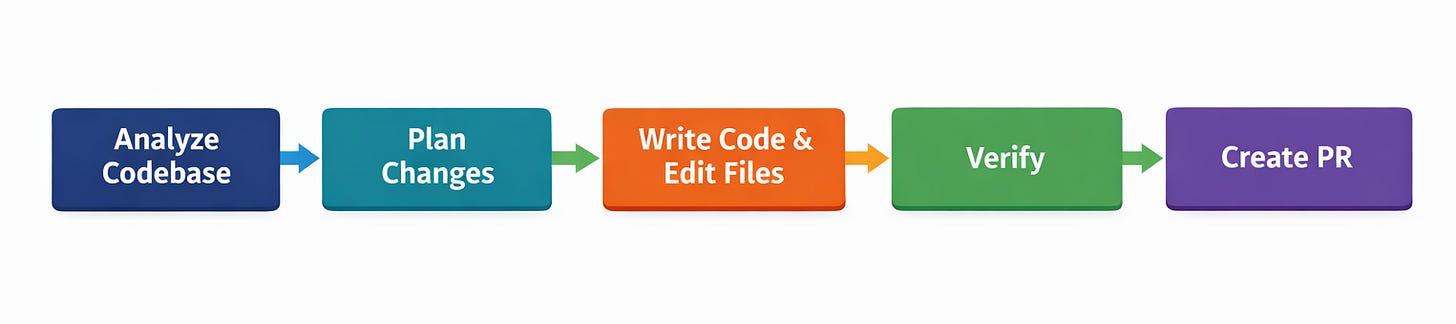

The agent proposes actions, executes steps, and reports outcomes. The system tracks the outcomes, manages allowed tools, and passes context between the phases in the workflow. That’s why the workflow looks less like a conversation and more like a state machine: this is the step you’re on, this is what you’ve done, execute the next action, and tell me when it’s complete.
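To make the state-machine idea concrete, here is a minimal sketch in Go. The phase names and the `Workflow` type are made up for illustration and are not Pedro's actual phases or types; the point is only that the system owns the transition table and the record of what has completed.

```go
package main

import (
	"errors"
	"fmt"
)

// Phase names are hypothetical; the post does not list Pedro's real phases.
type Phase string

const (
	PhasePlan      Phase = "plan"
	PhaseImplement Phase = "implement"
	PhaseReview    Phase = "review"
	PhaseDone      Phase = "done"
)

// next maps each phase to the single phase allowed to follow it.
var next = map[Phase]Phase{
	PhasePlan:      PhaseImplement,
	PhaseImplement: PhaseReview,
	PhaseReview:    PhaseDone,
}

// Workflow records the step the job is on and the steps it has finished.
type Workflow struct {
	Current   Phase
	Completed []Phase
}

// ErrFinished is returned when there is no phase left to advance to.
var ErrFinished = errors.New("workflow already finished")

// Advance marks the current phase complete and moves to the next one.
// The system, not the agent, decides which phase comes next.
func (w *Workflow) Advance() error {
	n, ok := next[w.Current]
	if !ok {
		return ErrFinished
	}
	w.Completed = append(w.Completed, w.Current)
	w.Current = n
	return nil
}

func main() {
	w := &Workflow{Current: PhasePlan}
	for w.Advance() == nil {
		fmt.Printf("completed=%v current=%s\n", w.Completed, w.Current)
	}
}
```

In a sketch like this the agent does the work inside a phase, but it never gets to decide that the workflow has moved on; only `Advance` does that.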

That distinction is the difference between an impressive demo and something I can actually trust.

The First Wrong Assumption: “The Agent Will Know When It’s Done”

Early versions of Pedro assumed something deceptively simple: the agent would know when it was finished. Anthropic has written about how agents perform better when they’re allowed to retry failed actions and recover from errors, rather than being forced into single-shot execution. What’s often missed is that these retries are still bounded by system-defined success and failure conditions—something I only appreciated after trying to build this myself.

You have to remember that agents are only as good as the instructions they are given. When I started creating the coding agent, I imagined I could give it a task, a system prompt listing the tool calls, and a 30-round iteration budget, and it would just hand me a PR. Well, that didn’t work. Between limited context windows, lost instructions, and other things that happen with compaction, there were too many tool calls and too much system prompt for the Ollama and 30B models I was using. Being the problem-solver I am, I pivoted. I bound the context to phases and allowed the agent to make only a subset of tool calls in each phase.
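Here is a rough sketch of what phase-scoped tool access can look like. The tool names, phase names, and the `Dispatch` function are all assumptions for illustration, not Pedro's real tool set; the idea is that the system rejects an out-of-phase tool call before it ever reaches a tool.

```go
package main

import "fmt"

// allowedTools lists which tools each phase may call.
// Phase and tool names are hypothetical examples.
var allowedTools = map[string][]string{
	"plan":      {"search_code", "read_file"},
	"implement": {"read_file", "write_file", "run_tests"},
	"review":    {"read_file", "git_diff"},
}

// ToolAllowed reports whether a tool may be called in the given phase.
func ToolAllowed(phase, tool string) bool {
	for _, t := range allowedTools[phase] {
		if t == tool {
			return true
		}
	}
	return false
}

// Dispatch rejects out-of-phase tool calls before they reach any real tool.
func Dispatch(phase, tool string) error {
	if !ToolAllowed(phase, tool) {
		return fmt.Errorf("tool %q is not allowed in phase %q", tool, phase)
	}
	// A real system would invoke the tool here.
	return nil
}

func main() {
	fmt.Println(Dispatch("plan", "write_file"))      // rejected: planning cannot write files
	fmt.Println(Dispatch("implement", "write_file")) // accepted
}
```

The same table can drive the prompt for each phase, so a small model only ever sees the handful of tools it is actually allowed to use.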

Why Background Jobs Forced Real Architecture

Once I accepted that the agents were not all-powerful, I was able to actually think about a cohesive system that enables agentic execution.

If work was going to happen asynchronously (while I was offline, on my phone, or doing something else), I needed answers to all these boring questions:

Is this job still running?

What step is it on?

What has already completed?

What failed, and why?

Can I retry only the failed part?

Those aren’t agent questions, they’re systems questions. The system needed a simple orchestrator that kept track of steps. So we built that in Go, well, we vibe-coded it 🤪.

In our orchestrator, a JobProgress object keeps track of status. At the end of each phase it is written back to the state system, and that state is carried along and updated until the job is completed:

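    // JobProgress is the record the orchestrator updates at the end of each phase.
    type JobProgress struct {
        JobID     string    // identifies the job
        Phase     string    // phase the job is currently on
        Status    Status    // lifecycle status of the job
        StartedAt time.Time // when the job began
        UpdatedAt time.Time // last time the state was written
        Error     string    // failure reason, empty if nothing failed
    }

This increased the agent’s overall accountability.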

Once jobs had a lifecycle, state, and artifacts, Pedro stopped being “an agent that runs” and became an agent operating inside a system.
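As a sketch of how a record like this can answer the boring questions from earlier, here is a tiny in-memory store. The `Store` type, its methods, and the `Status` values are assumptions for illustration (the post does not show how Status or the state system is defined); the mutex is there because parallel jobs will write to the same state.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// Status values are assumptions; the post does not show how Status is defined.
type Status string

const (
	StatusRunning   Status = "running"
	StatusCompleted Status = "completed"
	StatusFailed    Status = "failed"
)

type JobProgress struct {
	JobID     string
	Phase     string
	Status    Status
	StartedAt time.Time
	UpdatedAt time.Time
	Error     string
}

// Store is a hypothetical in-memory state store, safe for parallel jobs.
type Store struct {
	mu   sync.Mutex
	jobs map[string]JobProgress
}

func NewStore() *Store { return &Store{jobs: make(map[string]JobProgress)} }

// Update records the outcome of a phase for a job, creating the record if needed.
func (s *Store) Update(jobID, phase string, status Status, errMsg string) {
	s.mu.Lock()
	defer s.mu.Unlock()
	now := time.Now()
	p, ok := s.jobs[jobID]
	if !ok {
		p = JobProgress{JobID: jobID, StartedAt: now}
	}
	p.Phase, p.Status, p.Error, p.UpdatedAt = phase, status, errMsg, now
	s.jobs[jobID] = p
}

// Get answers: is the job running, what step is it on, what failed and why?
func (s *Store) Get(jobID string) (JobProgress, bool) {
	s.mu.Lock()
	defer s.mu.Unlock()
	p, ok := s.jobs[jobID]
	return p, ok
}

func main() {
	s := NewStore()
	s.Update("job-1", "plan", StatusCompleted, "")
	s.Update("job-1", "implement", StatusFailed, "tests did not pass")
	p, _ := s.Get("job-1")
	fmt.Printf("%s: phase=%s status=%s error=%q\n", p.JobID, p.Phase, p.Status, p.Error)
}
```

Because the failed phase is stored by name, a retry can start from that phase instead of rerunning the whole job.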

The Agent Is Not the System

This was an uncomfortable realization for me.

It’s tempting to make the agent the center of everything: the planner, the executor, and the decider. But the more responsibility I gave the model, the less desirable the outcomes became. AI is revolutionary, amazingly powerful, and may seem to have limitless potential, but at the end of the day it’s still just a tool. When I was using that tool as the whole system instead of as part of the system, I lost all visibility into what was happening, and I lost my ability to debug. When something failed, I couldn’t tell whether it was reasoning, prompting, tooling, state, or execution.

So I inverted the relationship.

The system defines what a job is, what steps exist, what counts as progress, and what completion actually means. The agent operates freely inside those constraints.

That shift alone eliminated entire classes of bugs, simply because narrowing the agent’s world view maximized its potential. It still makes tool calls and decides what code to write, how to structure the blog post, or how to build a podcast outline in whatever order it wants, but context, tools, and prompts are now phase-scoped. Although each phase is informed by the previous steps’ output, it does not need to carry the whole context of the interaction with it.

Visibility Changed Everything

Agents don’t understand “done” unless the system defines what that means. They generate language that sounds complete because that’s what they’re optimized to do. Without structure, “I’m finished” is just another string of tokens. I learned this the hard way.

Jobs would run for minutes and then confidently report success without producing anything actually usable: no files written, no commits, no PRs, no observable side effects of any kind. One time it said: “Oh, the system prompt gave me json, this means the code what generated, work done.” From the agent’s perspective, it felt done, but from the system’s perspective, nothing had happened.

The gap between narrative completion and actual outcomes is where reliability quietly dies.

Once that feedback loop existed, my behavior changed. I stopped over-prompting and blaming models, because most failures weren’t AI problems at all. They were architectural mistakes that only became obvious once I could observe the system honestly.

The Workflow That Finally Made Sense

At some point, I stopped thinking in terms of “an agent running” and started thinking in terms of a workflow the agent operates inside.

The important part isn’t the number of phases, the number of iterations, or the number of tool calls. I even made some phases optional based on instruction so the agent can “elect” to skip a process if needed. The important part is that now I can tell what the agent is doing and debug it if needed; I know context is not being lost, and I know that progress is being made on the task.

Progress is owned by the system, not the agent.

Why This Became a Real Learning Project

If you only interact with agents through chat interfaces or third-party frameworks, it’s easy to believe better reasoning is the solution to everything.

Building PedroCLI taught me the opposite.

Nearly every failure I encountered had nothing to do with intelligence. They were failures of state management, execution semantics, termination conditions, observability, and ownership of control flow, and those are software engineering problems.

This project forced me to stop treating AI as magic and start treating it as what it actually is: a probabilistic component embedded inside a deterministic system. Once you do that, the questions change.

You stop asking, “How do I make the agent smarter?”

You start asking, “How do I make the system safer?”

Where This Takes Us Next

Even after jobs and state were explicit, things still stalled. Jobs claimed completion early. Tool calls failed intermittently. Agents looped.

The problem wasn’t thinking; it was that the agent didn’t know what step it was allowed to take next.

When I finally realized that, I started looking directly at workflows.

In the next post, I’ll walk through why self-iterating agents break down under real load and how introducing phased workflows fundamentally changed reliability, especially on local, quantized models.

If you’ve ever felt like an agent was almost dependable but never quite trustworthy, that’s where things finally start to come together.

Stay Connected

If you want to follow along as this system continues to evolve:

YouTube: https://youtube.com/@soypete

Discord: https://discord.gg/soypete

Twitter / X: https://twitter.com/soypete

O’Reilly Go Programming Course: https://tr.ee/lbgNjvyc6f

I’m documenting this project as it grows, including the parts that don’t work yet. If you’re building agents, tooling, or just trying to make AI behave predictably in your own workflow, you’re very much the audience for this.

More to come.
