Why I Hate the Term “Context Engineering”

(and Why Everyone Is Doing It Wrong)

TL;DR

We keep treating “context engineering” like prompt tweaking or a new job title, and that’s why agents keep failing in predictable ways. AI systems don’t bring context with them. They start empty, then probabilistically act on whatever we expose. The real work isn’t letting models “figure it out”; it’s deterministically engineering how scoped data, semantics, tools, and instructions enter the system so we can shape outcomes without handing the model all the information in the world. Context engineering at its core is systems design, and most people are doing it wrong because they’re focused on prompts instead of data.

I want to talk about context — not prompts, not tokens, not “agent magic,” but context.

And I’m going to start with an analogy I’m stealing from my friend Tod Hansmann, who dropped this gem at a meetup:

The difference between American cinema and Japanese cinema is context.

In American cinema, there’s an expectation that you come with a shared cultural background. Certain stories can only be told through certain lenses. You’re expected to already know how to read what’s happening.

In Japanese cinema, the assumption is the opposite: you come with no context.

That’s why the same story can be told with children, adults, witches, demons, or aliens — it doesn’t matter. The story is portable. The system doesn’t rely on the audience to bring the meaning with them.

And this is exactly where we are getting AI wrong.

We Expect AI to Bring Context — and That’s the Bug

We expect AI to know everything, and that expectation is wrong.

Go back to GPT-3 and GPT-3.5 — the pre–tool call era. All the model had was:

The text you gave it

Statistical patterns from training

A next-token prediction loop

There was no validation, no math, no summation, and no task execution. The model could not do anything; it could only predict what came next based on patterns. Fast forward to today, and AI providers have layered tool calls on top of that foundation. Now we have access to web search, plugins, code execution, and APIs. This is what enabled “agents.”

Now when the model predicts a sequence that requires more information, it can go get it. And here’s where the American-cinema assumption sneaks in. We assume that because tool calls exist, the model is bringing context with it.

It isn’t.

The agent still starts with the same amount of context as before, but it now has the ability to fetch more, and that distinction matters more than people realize.
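To make that distinction concrete, here is a minimal sketch of an agent loop. Everything in it is hypothetical: `fake_model` stands in for a real model call, and `lookup_order` is an invented tool. The only point is that the context starts with nothing but the user's text, and it grows solely through what the loop chooses to append.

```python
from typing import Callable

# Hypothetical tool: the only way new information can enter the context.
def lookup_order(order_id: str) -> str:
    orders = {"A17": "shipped"}
    return orders.get(order_id, "unknown")

TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}

def fake_model(context: list[dict]) -> dict:
    """Stand-in for a real model: asks for a tool once, then answers."""
    tool_results = [m for m in context if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "lookup_order", "arg": "A17"}
    return {"type": "answer", "text": f"Order A17 is {tool_results[-1]['content']}."}

def run_agent(user_text: str) -> tuple[str, list[dict]]:
    # The agent starts with exactly what we gave it and nothing else.
    context = [{"role": "user", "content": user_text}]
    while True:
        step = fake_model(context)
        if step["type"] == "answer":
            return step["text"], context
        # Fetched data becomes context only because we append it here.
        result = TOOLS[step["name"]](step["arg"])
        context.append({"role": "tool", "content": result})

answer, final_context = run_agent("Where is order A17?")
```

The model never "knew" the order status. It asked, the loop fetched, and the result was placed into context by code we control.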

Multi-Bot and Plugin Failures Were Predictable

These systems failed because they had the entire world open to them.

They could link information they shouldn’t, perform operations they shouldn’t, chain tools in ways no one intended, and act on data with no semantic constraints.

That wasn’t an agent problem. It was a problem solvable by engineering, and that problem was a context problem.

In theory, context engineering could have prevented almost all of it (a few programmatic guardrails never hurt anybody, am I right?). But instead of refining what the system was allowed to see and do, we handed it everything and hoped it would figure it out.

That was never going to work.
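As a rough illustration of what a programmatic guardrail can look like, the sketch below scopes an agent to an allowlist of tools. The tool names, `ToolNotAllowed`, and `make_scoped_executor` are all invented for this example; real frameworks expose their own mechanisms for this.

```python
from typing import Callable

# Hypothetical tools the platform could expose.
def read_invoice(invoice_id: str) -> str:
    return f"invoice {invoice_id}: $120.00"

def delete_customer(customer_id: str) -> str:
    return f"customer {customer_id} deleted"

ALL_TOOLS: dict[str, Callable[[str], str]] = {
    "read_invoice": read_invoice,
    "delete_customer": delete_customer,
}

class ToolNotAllowed(Exception):
    """Raised when the model requests a tool outside its scope."""

def make_scoped_executor(allowed: set[str]) -> Callable[[str, str], str]:
    """Return an executor that can only run the allowlisted tools."""
    def execute(name: str, arg: str) -> str:
        if name not in allowed:
            # Deterministic check in code, not a polite request in the prompt.
            raise ToolNotAllowed(name)
        return ALL_TOOLS[name](arg)
    return execute

# A billing-question agent gets read access only.
billing_executor = make_scoped_executor({"read_invoice"})
```

With this in place, `billing_executor("read_invoice", "42")` returns the invoice line, while `billing_executor("delete_customer", "42")` raises `ToolNotAllowed` no matter what the model predicted.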

The Prompt Was Not the Problem

This is also why you don’t hear much about prompt engineering anymore.

Prompt engineering was about dumping context upfront by explaining what you want done, encoding policy directly, and then hoping the model would get it.

That falls apart the moment agents enter the picture, and what replaced this process is what people now call context engineering.

Context engineering decides what information is introduced and when, how long it persists, and what gets dropped between steps. Both tool calls and results are context, and so is injected data.
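One way to picture those decisions is to give every piece of context an explicit lifetime. This is only a sketch under my own assumptions: `ContextItem`, `introduced_at`, and `ttl` are hypothetical names, and steps are simple integers.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ContextItem:
    content: str
    introduced_at: int          # step at which the item becomes visible
    ttl: Optional[int] = None   # steps it stays visible; None means it persists

    def visible_at(self, step: int) -> bool:
        if step < self.introduced_at:
            return False
        return self.ttl is None or step < self.introduced_at + self.ttl

def build_context(items: list[ContextItem], step: int) -> list[str]:
    """Return only what the model should see at this step."""
    return [item.content for item in items if item.visible_at(step)]

items = [
    ContextItem("task: reconcile March invoices", introduced_at=0),
    ContextItem("tool result: 3 unpaid invoices", introduced_at=1, ttl=2),
    ContextItem("schema: invoices(id, amount, paid)", introduced_at=1, ttl=1),
]
```

At step 0 the model sees only the task. At step 1 it sees all three items. By step 3 the tool result and schema have been dropped and only the task remains.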

BUT...I hate the term “Context Engineering.”

I hate it because it sounds like a role. It isn’t. It’s a skill. You don’t hire an infrastructure engineer who doesn’t understand DevOps. Someone who can only SSH into prod and run bash scripts isn’t an SRE, they’re a sysadmin. DevOps is a skill, not a title.

Context engineering is the same thing. Every AI engineer needs this skill. And the reason I hate the term is that I don’t think we understand what it actually means yet.

The Real Issue: We Don’t Know How to Use Data In the Moment

“Oh Miriah I know how to use data, I just read it from the database!”

Wrong. Do you ever just give it to the user as-is? That is what I thought. As a developer, you use the data to present a set of information in a palatable way. That is context engineering.

Historically, engineers use data in two ways:

Analytics — analyzing what already happened

State — recording what already happened

Even machine learning training is retrospective. It’s always history layered on history. We are bad at using data during execution, which is why online inference never really worked the way people hoped. And that’s why I dislike how context engineering is framed today. People still think it’s about “making the prompt better.”

It isn’t.

It’s about how you introduce data — securely, reliably, and with meaning — into a live system. If I could start any company right now, it would be one that makes this easier because it’s genuinely hard.

System Prompts Are Products, Not Scratch Pads

System prompts should not be constantly rewritten. They are the product you ship as an AI engineer. Once they are live and out of active development, their structure should change very rarely. They should be designed intentionally, released deliberately, and left stable. When I say “stable,” I don’t mean static, though. A system prompt can absolutely be a template. You can inject values at runtime or parameterize it. You can even swap in scoped instructions. That’s fine; everything inside a system prompt should still be deterministic.

But that’s the boundary. What happens after that boundary is probabilistic. Context engineering exists to shape those probabilities, not to redefine behavior.

You don’t make the model deterministic, you make the inputs deterministic. That’s the contract.
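Here is a small sketch of a templated system prompt with deterministic inputs, using Python's standard `string.Template`. The prompt wording, `render_system_prompt`, and the parameter names are assumptions made up for illustration.

```python
from string import Template

# The shipped artifact: structure changes rarely, values vary per request.
SYSTEM_PROMPT = Template(
    "You are a support agent for $product.\n"
    "Answer only questions about account $account_id.\n"
    "Allowed actions: $allowed_actions."
)

def render_system_prompt(product: str, account_id: str, allowed_actions: list[str]) -> str:
    """Same inputs always yield the same prompt text."""
    return SYSTEM_PROMPT.substitute(
        product=product,
        account_id=account_id,
        # Sorting removes ordering differences between callers.
        allowed_actions=", ".join(sorted(allowed_actions)),
    )

prompt = render_system_prompt("Acme Billing", "acct-001", ["refund", "lookup"])
```

`substitute` raises `KeyError` if a placeholder is left unfilled, so a half-rendered prompt fails loudly instead of reaching the model. What the model does with the prompt is still probabilistic; what it receives is not.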

More Instructions Don’t Fix Context — Timing Does

I see this failure mode constantly with coding agents like Claude Code.

When people want the agent to behave better, their instinct is always the same: add more. But adding more information does not solve the problem, it makes it worse. The mistake we make is putting everything into history.

The closer information is to the system prompt or the beginning of the conversation, the less likely it is to be used as the plan evolves.

These models, even reasoning models, can only hold so much context at once because they are prediction systems. No matter how many instructions you provide, some of them will not be used in the next prediction, and information will be selectively ignored for the purpose of finishing the current task. That’s why context engineering is never about more information; it’s about the right information, at the right time, in the right place.

With coding agents, context engineering is literally typing into the prompt window. That’s what editors and assistants are doing when they surface a file, a diff, or a symbol — they’re saying “now is the moment this information matters.”

Compaction is also a context engineering technique. Compaction trades recent conversational detail for proximity to the original instructions. You lose history, but you don’t lose context engineering because context engineering is still the user’s job.

And this is exactly what background agents and fully autonomous workflows lack: a human deciding what information matters right now. That’s why those systems have to be engineered so carefully up front.
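For readers who have not seen compaction spelled out, here is one common shape of it, sketched under assumptions: the first message is treated as the system prompt, `summarize` is a placeholder where a real system would likely call a model, and `keep_recent` is a hypothetical knob. It is not a description of how any particular tool implements compaction.

```python
def summarize(messages: list[dict]) -> str:
    """Placeholder summarizer; a real system would likely call a model here."""
    return f"[summary of {len(messages)} earlier messages]"

def compact(history: list[dict], keep_recent: int = 2) -> list[dict]:
    """Keep the system prompt, collapse older turns, keep the newest turns."""
    # Assumes history[0] is the system prompt.
    system, rest = history[0], history[1:]
    if len(rest) <= keep_recent:
        return list(history)
    split = len(rest) - keep_recent
    older, recent = rest[:split], rest[split:]
    summary = {"role": "user", "content": summarize(older)}
    return [system, summary, *recent]

history = [
    {"role": "system", "content": "You are a coding agent."},
    {"role": "user", "content": "Rename the helper."},
    {"role": "assistant", "content": "Renamed in utils.py."},
    {"role": "user", "content": "Now update the tests."},
    {"role": "assistant", "content": "Updated test_utils.py."},
    {"role": "user", "content": "Run the linter."},
]
compacted = compact(history)
```

After compaction the six messages become four: the system prompt, one summary line, and the two most recent turns. The detail in the collapsed turns is gone, which is the history you lose.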

What Context Engineering Actually Is

Context engineering is the disciplined provision of:

Tool calls

Tool results

Scoped supplemental data

Semantic and relationship information

Explicit task instructions

Raw data without semantics is useless. Bigger models don’t fix that. More autonomy doesn’t fix that.
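A tiny example of the gap between raw data and data with semantics. The field names, the `FIELD_SEMANTICS` mapping, and `describe` are all hypothetical; the idea is only that meaning and relationships are attached in code before the model sees anything.

```python
# Raw row as it might come out of a database: values with no meaning attached.
raw_row = {"amt": 12000, "st": 2, "cid": "c-88"}

# Hypothetical semantic layer: what each field means and how it relates to others.
FIELD_SEMANTICS = {
    "amt": {"name": "invoice_amount", "unit": "USD cents"},
    "st": {"name": "status", "values": {1: "paid", 2: "overdue"}},
    "cid": {"name": "customer_id", "relates_to": "customers.id"},
}

def describe(row: dict) -> list[str]:
    """Turn a raw row into lines a model can act on without guessing."""
    lines = []
    for key, value in row.items():
        meta = FIELD_SEMANTICS[key]
        if "values" in meta:
            # Decode enumerated codes into their meaning.
            value = meta["values"][value]
        line = f"{meta['name']} = {value}"
        if "unit" in meta:
            line += f" ({meta['unit']})"
        if "relates_to" in meta:
            line += f" [references {meta['relates_to']}]"
        lines.append(line)
    return lines

context_lines = describe(raw_row)
```

Given only `raw_row`, a model has to guess whether `amt` is dollars or cents and what status `2` means. Given `context_lines`, it does not.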

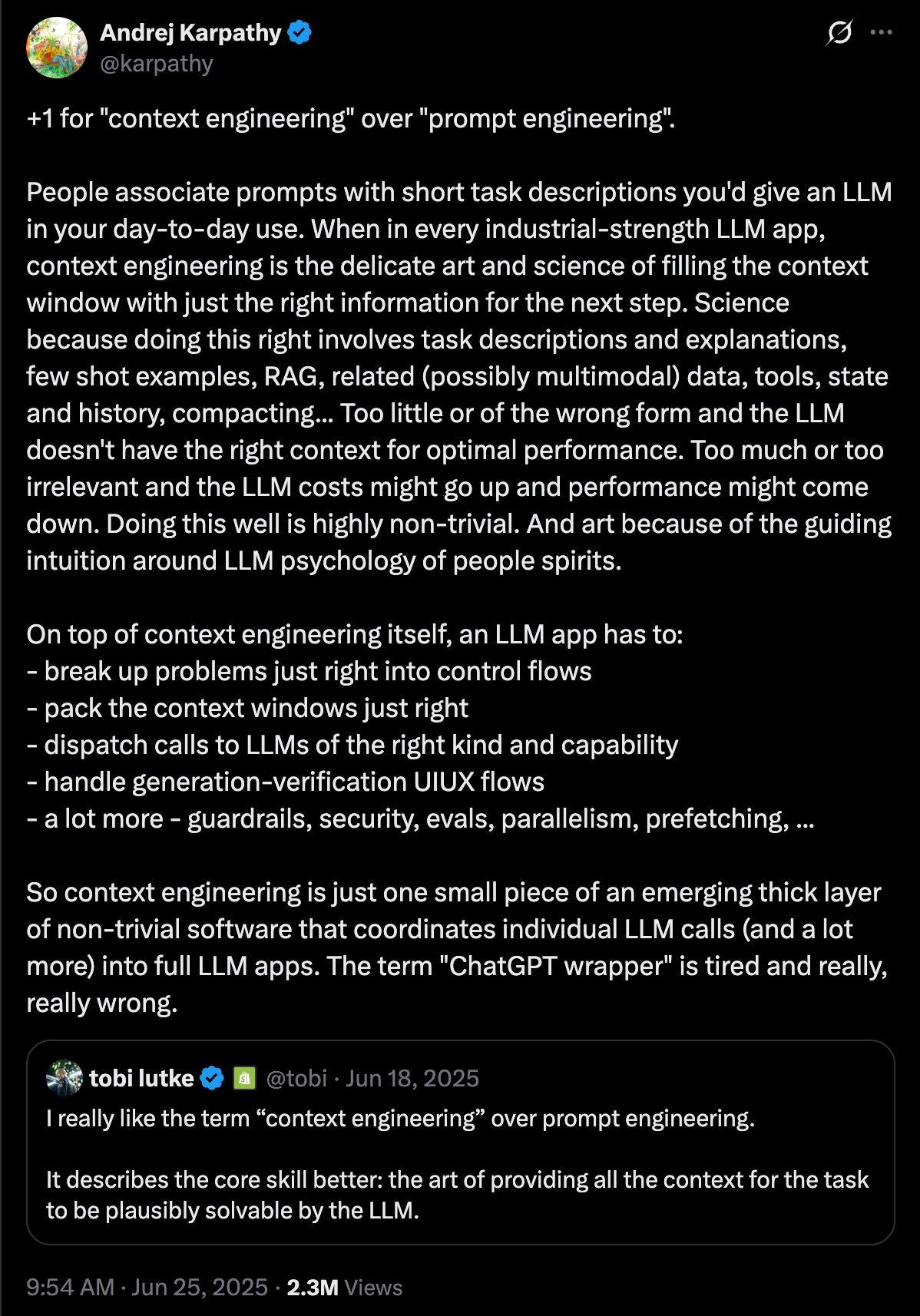

https://x.com/karpathy/status/1937902205765607626?s=20

We already saw what happens when agents can do “anything.” Anything includes the things you absolutely did not want them to do. Context engineering is not about letting AI figure it out, it’s about refining its purpose.

From Magic to Software

Once you treat context engineering as structural management, agents stop looking like fragile demos and start looking like automation.

They become predictable, scoped, and auditable.

At that point, agents aren’t magic anymore — they’re software. Stop letting AI “figure it out,” and start engineering how data with meaning enters the system.
